Sonic the Hedgehog 3

We’re delighted to announce our involvement in Sonic the Hedgehog 3, now showing in cinemas. Directed by Jeff Fowler, Sonic 3 has been a monumental rigging challenge. At Red9, we developed all the character and facial rigs for the film, ensuring every character’s performance was both expressive and true to the original designs.

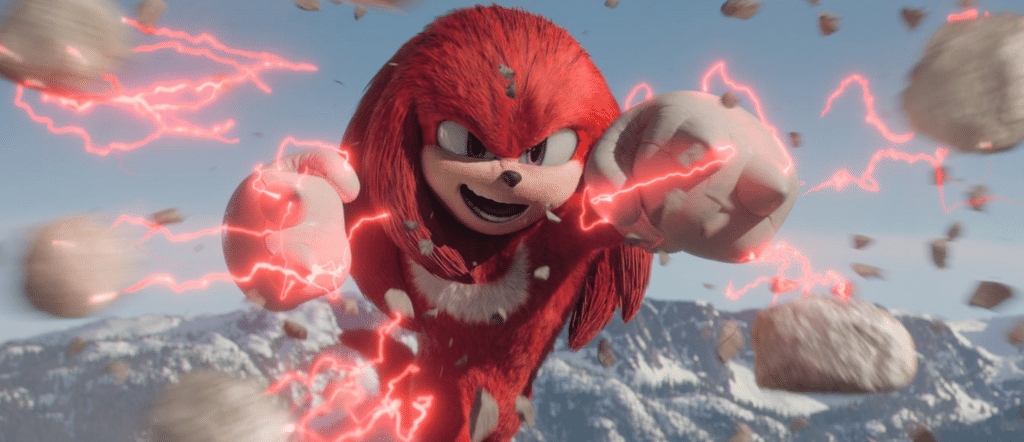

This project demanded an extraordinary amount of effort to achieve the level of performance required for such dynamic characters, all while preserving the integrity of the models. Fortunately, we were able to build upon the foundational work we’d completed for the Knuckles series on Paramount+, giving us a fantastic starting point for the film’s characters.

Due to the scale of the project, Paramount took the unusual approach of centralising the rigging. Instead of each of the six key VFX vendors (Rising Sun Pictures, Rodeo FX, ILM, Fin, Marza and Paramount’s in-house team) setting up the characters internally, they received a standardized “delivery package” of assets. This not only streamlined the process but, more importantly, ensured consistency in character design across all shots. It also allowed animation supervisor Clem Yip to distribute key character pose libraries, further guaranteeing seamless performances throughout the film.

With “Knuckles” we had already laid the groundwork, but for the film we had to massively increase the complexity of all the rig control systems. Working closely with Tyson Hesse and Alin Bolcas, we refined the setups to capture each character’s unique personality.

The character team at Paramount wanted to push the facial shapes harder whilst giving the animators greater flexibility to finesse performances. This meant going back to basics and adding entirely new levels of mesh control under the hood. A huge amount of work was done at the sculpting phase to add an ever-increasing number of cross-blends, essential for managing such complex combinations of facial shapes. The brows were particularly difficult because of the huge range of motion the characters required.
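To give a feel for what a cross-blend does, here is a minimal sketch in plain Python. It is not the production setup: it assumes the common convention that a corrective shape's weight is the product of the weights of the shapes that drive it, and the shape names (`browUp_L`, `browIn_L`) and function names are hypothetical, chosen purely for illustration.

```python
from math import prod


def combo_weight(driver_weights):
    """Weight of a corrective (cross-blend) shape.

    Assumption: the corrective fires in proportion to the product of its
    driver weights, each clamped to the 0..1 range.
    """
    clamped = [min(max(w, 0.0), 1.0) for w in driver_weights]
    return prod(clamped)


def evaluate_face(weights, combos):
    """Return the primary shape weights plus the derived cross-blend weights.

    weights: {shape_name: weight} set by the animator controls.
    combos:  {combo_name: (driver_shape_name, ...)} (hypothetical names).
    """
    result = dict(weights)
    for name, drivers in combos.items():
        # A driver that is missing from `weights` counts as switched off.
        result[name] = combo_weight([weights.get(d, 0.0) for d in drivers])
    return result


if __name__ == "__main__":
    controls = {"browUp_L": 0.5, "browIn_L": 0.5}
    combos = {"browUp_browIn_L": ("browUp_L", "browIn_L")}
    print(evaluate_face(controls, combos))
```

With both brow shapes at half strength the corrective sits at a quarter, and it only reaches full strength when every driver is fully on. Each extra shape that can combine with the others needs its own sculpted correctives, which is why the number of cross-blends grows so quickly.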

Maya Rigs:

The rigs were built entirely using native Maya nodes, a deliberate choice to ensure seamless compatibility across all vendors without relying on custom plugins. We put a great deal of effort into optimizing their performance so that they evaluated as fast as possible. To achieve this, we layered deformers in carefully structured stacks and employed various advanced techniques to keep the rigs running in real time, making sure animators could work smoothly and stay productive. Animators interacted directly with the final asset in Maya, meaning what they saw in the Maya viewport was the exact asset exported to the final caches for rendering.

We were also tasked with developing a suite of custom tools for the film, which became part of our Red9 ProPack toolset. This meant that not only did the animators receive consistent rigs, but they also benefited from a unified toolset within Maya. Among the tools we developed were solutions for secondary overlapping animations to handle the characters’ hair spikes, as well as a new animated trails tool to capture the dynamic “fast zips” the characters make across the screen.
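The idea behind secondary overlap can be sketched in a few lines of plain Python. This is not the ProPack implementation, which is not described here: it assumes a simple per-frame "lag" filter in which each joint in a spike chases the motion of the joint before it, and the function names and the `stiffness` parameter are hypothetical.

```python
def lag_follow(target_values, stiffness=0.5):
    """Make a value chase its target with a delay, one sample per frame.

    stiffness=1.0 follows the target exactly; smaller values lag further behind.
    """
    if not 0.0 < stiffness <= 1.0:
        raise ValueError("stiffness must be in the range (0, 1]")
    followed = []
    current = None
    for target in target_values:
        if current is None:
            current = target  # start in sync on the first frame
        else:
            current += (target - current) * stiffness
        followed.append(current)
    return followed


def overlap_chain(root_values, joint_count, stiffness=0.5):
    """Propagate the lag down a chain: each joint follows the one before it."""
    chain = []
    driver = list(root_values)
    for _ in range(joint_count):
        driver = lag_follow(driver, stiffness)
        chain.append(driver)
    return chain


if __name__ == "__main__":
    head_rotation = [0.0, 10.0, 10.0, 10.0]  # the head snaps to 10 degrees
    for index, curve in enumerate(overlap_chain(head_rotation, joint_count=2)):
        print(f"spike joint {index}: {curve}")
```

Because each joint trails the one above it, the tip of a spike settles later than its base, which gives the characteristic drag and follow-through.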

In addition to the lead characters, we provided secondary “ball” rigs, used when Sonic and Knuckles zip along, as well as various digital double stand-in rigs for motion tracking, which were crucial for the project.

Sonic the Hedgehog 3 has been an incredible project, and we’d like to extend our heartfelt thanks to VFX director Ged Wright and the entire Sonic 3 team for entrusting us with such a pivotal role in a project of this scale.